UX Certification

Get hands-on practice in all the key areas of UX and prepare for the BCS Foundation Certificate.

There are many findings in psychology that are of general interest to user researchers. But here are 10 that have particularly influenced the way I go about my day-to-day work.

There are many obvious sources of bias when carrying out user research. For example, most people are aware of the need to avoid leading questions and to avoid 'selling' participants on the design. But psychology also teaches us that there are many subtle ways bias can creep into a study. It might take the form of writing down what you thought the participant meant rather than what she actually said. Or, during a usability test, saying "Good" or "That's right" when the participant uses the system in a particular way. Or failing to randomise the order of the tasks you ask people to carry out: participants approach later tasks in a usability test with more knowledge of the system than they had for earlier ones, so a fixed order mixes up task difficulty with learning.
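One common way to control for learning effects is to counterbalance task order. The sketch below is a minimal illustration. It assumes you have a short list of tasks and want each task to appear in every position equally often. It builds a simple cyclic Latin square and hands its rows to participants in a shuffled sequence. A cyclic square balances position, though not which task immediately precedes which; a balanced Latin square handles that. The function names `latin_square_orders` and `assign_orders` are hypothetical and not part of any tool.

```python
import random

def latin_square_orders(tasks):
    """Cyclic Latin square: row r is the task list rotated by r places,
    so every task appears exactly once in every position."""
    n = len(tasks)
    return [[tasks[(row + col) % n] for col in range(n)] for row in range(n)]

def assign_orders(tasks, n_participants, seed=None):
    """Give each participant one row of the square. The rows are shuffled
    once, then reused in turn, so position counts stay balanced whenever
    n_participants is a multiple of the number of tasks."""
    rng = random.Random(seed)
    rows = latin_square_orders(tasks)
    rng.shuffle(rows)
    return [list(rows[i % len(rows)]) for i in range(n_participants)]

# Hypothetical usage: four tasks, eight participants.
for participant, order in enumerate(assign_orders(["T1", "T2", "T3", "T4"], 8, seed=42), start=1):
    print(participant, order)
```

With eight participants and four tasks, each task is attempted first by exactly two people, second by two people, and so on.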

Psychologists have been wrestling with the issue of experimental design for some time, and every user researcher needs a basic grounding in what to do and what to avoid. For more detail, read Philip Hodgson's article on controlling experimenter effects, and for even more detail, try Critical Thinking About Research: Psychology and Related Fields by Julian Meltzoff.

Expert reviews are a great way to find usability bloopers in your product. We have even created a 247-item checklist to help guide an expert review of web sites. However, expert judgement should never be your only source of data. Psychological studies frequently show the limitations of expert judgement. For example, research shows that political pundits are no better than a coin flip at predicting the result of an election, and that wine tasters can't reliably tell whether they are smelling red or white wine. Part of the problem is that, in the same way that most people think they are better than the average driver, most experts believe they are better than the average expert. As a consequence, they are lulled into a false sense of security about their own ability. So by all means apply your expertise to make judgements about a design, but be sure to validate those judgements with data.

It's not always easy to be conclusive in user research, but thanks to psychology there are at least two common questions asked of user researchers where we can make predictions with some degree of certainty.

The first question is about the number of choices in a user interface. Next time you are faced with a cluttered UI design that offers so many choices that it looks like a menu in a Chinese restaurant, you should invoke Hick's Law (named after psychologist William Edmund Hick). This states that the time taken to make a decision increases with the number of alternatives on offer, so the more choices you present, the longer it will take a user to decide and select the correct one.
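To make the prediction concrete, here is a minimal sketch of one common formulation of Hick's Law:

T = a + b·log2(n + 1)

Here n is the number of equally likely choices, and a and b are constants fitted from data. The values used for a and b below are placeholders, not measurements. Because the relationship is logarithmic, each time n + 1 doubles, the predicted decision time rises by the same fixed amount b; it does not double. The law comes from choice reaction experiments, so treat it as a rough guide for menus rather than a precise model.

```python
import math

def hick_decision_time(n_choices, a=0.0, b=0.2):
    """Predicted decision time in seconds under Hick's Law,
    T = a + b * log2(n + 1). a and b are placeholder constants."""
    if n_choices < 1:
        raise ValueError("need at least one choice")
    return a + b * math.log2(n_choices + 1)

# Each step below doubles n + 1, so the prediction grows by b each time.
for n in (3, 7, 15, 31):
    print(n, round(hick_decision_time(n), 2))  # prints 0.4, 0.6, 0.8, 1.0
```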

The second question is about the size and location of controls. According to Fitts' Law (named after the psychologist Paul M Fitts), the time required to move rapidly to a target is a function of the distance to the target and its size: the further away and the smaller the target, the longer it takes. This applies both to desktop user interfaces (stepper controls, anyone?) and to touch screen interfaces that sometimes feel like they have been designed for people with talons for fingers. In practice, Fitts' Law doesn't mean you should just make big buttons, but that you should increase the clickable area. For example, allow a user to click on the text label next to a form field to place their cursor in the field for data entry.
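Here is a minimal sketch of the Shannon formulation of Fitts' Law that is widely used in HCI:

MT = a + b·log2(D/W + 1)

D is the distance to the target and W is its width along the direction of movement. The constants a and b are specific to each device and fitted from data; the defaults below are placeholders. The example compares a hypothetical 16-pixel checkbox with the same checkbox plus a 144-pixel clickable label. That is the kind of change the paragraph above recommends.

```python
import math

def fitts_movement_time(distance, width, a=0.0, b=0.1):
    """Predicted movement time in seconds under the Shannon formulation
    of Fitts' Law: MT = a + b * log2(D / W + 1).
    a and b are placeholder constants; real values are fitted per device."""
    if distance < 0 or width <= 0:
        raise ValueError("distance must be >= 0 and width > 0")
    index_of_difficulty = math.log2(distance / width + 1)  # in bits
    return a + b * index_of_difficulty

# Hypothetical example: both targets are 400 px from the pointer.
checkbox_only = fitts_movement_time(400, 16)
with_label = fitts_movement_time(400, 16 + 144)  # label makes target wider
print(f"checkbox only: {checkbox_only:.2f}s, with label: {with_label:.2f}s")
```

Widening the target lowers the index of difficulty. The same gain comes from enlarging the clickable area, without drawing a bigger button.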

We like to think that our decisions are rational and made after conscious deliberation. That's why it's so tempting to believe participants know why they behave in a particular way. But people are poor at introspecting into the reasons for their behaviour.

One of my favourite research studies demonstrating this was carried out forty years ago by psychologists Richard Nisbett and Timothy Wilson. The researchers set up a table outside a store with a sign that read, "Consumer Evaluation Survey: Which is the best quality?" On the table were four pairs of ladies’ stockings, labelled A, B, C and D from left to right. The largest share of people (40%) preferred D, and the fewest (12%) preferred A.

In fact, all the pairs of stockings were identical. The reason most people preferred D was simply a position effect: the researchers knew that people show a marked preference for items on the right side of a display (another finding from psychology). But when the researchers asked people why they preferred the stockings that they chose, people identified an attribute of their preferred pair, such as its superior knit, sheerness or elasticity. The researchers even asked people if they may have been influenced by the order of the items, but with just one exception (a psychology student who had just learnt about order effects) nobody thought this had affected their choice. Instead, people made up plausible reasons for their choice.

For hundreds of other studies in psychology that tell the same story, read Strangers to Ourselves: Discovering the Adaptive Unconscious by Timothy D Wilson.

In practice, this means that user interviews should never be your only source of data. Good user researchers don't just ask: they watch. You want to observe people because what people say does not always match what they do.

Eye tracking is compelling technology and makes a user researcher feel like a proper scientist. It can be invaluable for answering highly specific questions about a design (such as whether your form label should be to the left of or above a form field). But for user interface evaluation, there is very little that you'll discover from eye tracking that you can't discover more easily (and much more cheaply) by observing your users' behaviour.

There are at least two issues with eye tracking that have been known in psychology for many years but still appear to be news to user researchers. The first is that eye tracking measures where the eye is pointing, not necessarily where your attention is focussed, and the two are not always the same. My wife refers to this as Male Pattern Fridge Blindness when I'm unable to find the jam in the fridge even when I'm staring directly at it. The point is that what we look at does not always correlate with what we are attending to.

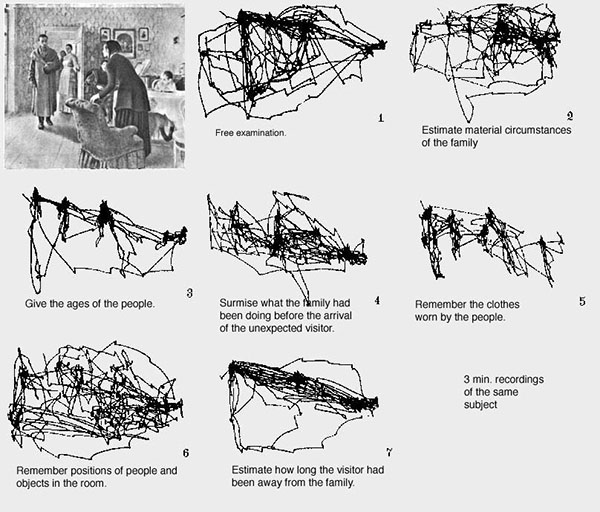

The second finding is that the way we scan a scene depends on the task we ask people to do. Russian psychologist Alfred Yarbus first noted this as long ago as 1967. The image below shows the different gaze paths of a person asked to do seven different tasks with the same picture. Compare the gaze path in task 3 (where the participant is trying to estimate the ages of the people in the picture) with task 6 (where people are trying to memorise the position of objects in the picture). This means you can't simply present a gaze path as evidence of 'what stands out' to your users.

Images from an eye tracking study carried out by Alfred Yarbus. Image from Wikipedia.

A fine book on this is Eye Tracking the User Experience by Aga Bojko. Although the author doesn't discuss the Yarbus study, she does do a great job of describing when and where eye tracking is useful.

In the previous section, I pointed out that psychologists have shown that we can look at one thing but our attention can be elsewhere. Here's a related, but contrasting, finding: we can get so focused on a task that we often miss the obvious just outside our area of attention. If you've not tried this selective attention test on YouTube by psychologist Daniel Simons, try it before reading on.

This effect is known as inattentional blindness, and it's one of the reasons people miss things in a user interface when they are carrying out specific tasks. It's a dramatic demonstration of how attention affects our perception.

This has huge implications for user research. For example, when moderating a usability test, it's very important not to begin by giving your participant a tour of the user interface. If participants don't notice something while doing a task, you need to discover that as part of your testing. Priming people on the features and functions in the system means you'll fail to discover what people miss.

A survey in 2011 showed that 63% of Americans believed that our memory is a bit like a video camera. Presumably, these people thought of our brain as laying down a continual record of the world. People with good memories have easy access to this record and people with bad memories struggle to replay it.

In fact, our memory is both selective and fallible. Psychologist Elizabeth Loftus demonstrated this with her 'Lost in the Mall' studies, where she showed that people could be made to believe, and then embellish, a totally false but plausible event (getting lost in a shopping mall as a child).

In practice, this means that asking people about their past behaviour could result in data that contains 'confabulation': made up stories that people believe are true but that never happened. People have a need to tell good stories and so they will make up what happened at certain points rather than leave gaps in their narrative. This is almost impossible to protect against when running user interviews and is a further argument for why you should complement interviews with observation.

Most people know the basics of dealing with quantitative data. Give them a few columns of numbers, and they know how to use a spreadsheet package to calculate the mean and the standard deviation. This works well for summative usability test data, where we have measures of time on task and completion rate, and for survey data. But analysing qualitative data from a field visit, or analysing usability problems from a usability test, requires a different approach. This is because qualitative research data cannot be meaningfully expressed numerically. For example, what does it mean to describe the 'average experience' of different people using a Government service to apply for a driving licence? Or how would you analyse usability test data to discover the 'average' usability problem?
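For contrast with the qualitative case, this is roughly all the quantitative summary involves. Here is a minimal Python equivalent of the spreadsheet calculation. It assumes one time-on-task value in seconds and one success flag per participant. The function name and the data are hypothetical, for illustration only.

```python
from statistics import mean, stdev

def summarise_task(times_on_task, completed):
    """Summary statistics for one task in a summative usability test.

    times_on_task: time in seconds for each participant.
    completed: True/False for each participant (task success).
    """
    return {
        "mean_time": mean(times_on_task),
        "sd_time": stdev(times_on_task),  # sample standard deviation
        "completion_rate": sum(completed) / len(completed),
    }

# Hypothetical data for five participants (not real results).
print(summarise_task([48.0, 62.5, 55.0, 71.0, 39.5], [True, True, False, True, True]))
```

There is no comparable one-line summary for a set of field notes or a list of usability problems.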

Qualitative research is about generating deep insights; it's not about making predictions about the population as a whole. Fortunately, psychologists have been dealing with these kinds of data for many years. The basic approach is as follows:

A recent finding in psychology is that a number of researchers don't know how to do statistics properly. The full story is complex, but the practitioner takeaway is that you can't cherry-pick your results.

For example, imagine that you gave the System Usability Scale (SUS) to participants at the end of a remote, unmoderated usability test comparing two different web-based prototypes. One hundred people use version 1 of the prototype and 100 different people use version 2.

The correct way of analysing these data is to calculate a single SUS score for each participant, and then carry out a statistical test where you compare the 100 participant scores for version 1 against the 100 participant scores for version 2.
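To illustrate the correct approach, here is a minimal sketch. It assumes each participant's responses are stored as a list of ten ratings on the usual 1 to 5 scale, in questionnaire order. `sus_score` applies the standard SUS scoring rule:

- odd-numbered items contribute the response minus 1;
- even-numbered items contribute 5 minus the response;
- the total is multiplied by 2.5 to give a score from 0 to 100.

For the comparison, the sketch uses a two-sample permutation test on the difference in mean scores. I chose it only because it needs nothing beyond the standard library. An independent-samples t-test (for example `scipy.stats.ttest_ind` with `equal_var=False`) is the more conventional choice. The function names and the data layout are assumptions for illustration.

```python
import random
from statistics import fmean

def sus_score(responses):
    """Convert one participant's ten 1-5 SUS responses into a 0-100 score."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs exactly ten responses, each from 1 to 5")
    total = 0
    for item_number, r in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (r - 1) if item_number % 2 == 1 else (5 - r)
    return total * 2.5

def permutation_test(a, b, n_resamples=10_000, seed=None):
    """Two-sided permutation test on the difference in mean scores.
    Returns an estimated p-value."""
    rng = random.Random(seed)
    observed = abs(fmean(a) - fmean(b))
    pooled = list(a) + list(b)
    n_a = len(a)
    extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)  # randomly relabel scores as version 1 or 2
        diff = abs(fmean(pooled[:n_a]) - fmean(pooled[n_a:]))
        if diff >= observed:
            extreme += 1
    # Add-one correction so the estimate is never exactly zero.
    return (extreme + 1) / (n_resamples + 1)
```

Suppose you have hypothetical lists `responses_v1` and `responses_v2`, with one ten-item list per participant. You would compute `scores_v1 = [sus_score(r) for r in responses_v1]`, do the same for version 2, and run a single test: `permutation_test(scores_v1, scores_v2, seed=1)`. That is one comparison, not ten.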

Another way of analysing the data, which is deeply flawed, would be to analyse each question separately across your 100 participants. For example, you could look for a statistical difference on Q1, then Q2 and so on. Because the SUS has 10 questions, this gives you 10 chances to find a statistical difference (rather than the one chance you have with a single SUS score). Even if there is no real difference between the prototypes, each test can come out 'significant' by chance. At a significance level of 0.1, you would expect about 1 of the 10 questions to show a difference. Even at the conventional 0.05 level, the chance that at least one question does is about 0.4 (1 - 0.95^10, treating the questions as independent). This approach is known as 'p-hacking' because you are trawling through your data looking for statistical differences that you will then justify after the fact.
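The arithmetic behind this is easy to check. Assume the tests are independent and there is no real difference between the prototypes. Then the chance of at least one false positive across k tests at significance level alpha is 1 - (1 - alpha)^k, and the expected number of false positives is alpha × k. The sketch below computes both. It confirms the first with a quick simulation, which relies on the fact that p-values are uniformly distributed when the null hypothesis is true. The function names are hypothetical.

```python
import random

def familywise_error_rate(alpha, k):
    """Chance of at least one false positive across k independent tests
    when there is no real difference anywhere."""
    return 1 - (1 - alpha) ** k

def expected_false_positives(alpha, k):
    """Average number of 'significant' results from k null tests."""
    return alpha * k

def simulate_familywise(alpha=0.05, k=10, n_studies=50_000, seed=0):
    """Monte Carlo check: under a true null, each p-value is uniform on
    [0, 1], so each test is 'significant' with probability alpha."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_studies):
        if any(rng.random() < alpha for _ in range(k)):
            hits += 1
    return hits / n_studies

print(round(familywise_error_rate(0.05, 10), 3))  # 0.401
print(expected_false_positives(0.1, 10))          # 1.0
print(simulate_familywise())                      # close to 0.40
```

Testing only the single overall SUS score keeps the false-positive rate at the level you chose.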

Did I miss your favourite psychological finding that user researchers should know about? If so, let me know in the comments.

Dr. David Travis (@userfocus) has been carrying out ethnographic field research and running product usability tests since 1989. He has published three books on user experience including Think Like a UX Researcher. If you like his articles, you might enjoy his free online user experience course.

Gain hands-on practice in all the key areas of UX while you prepare for the BCS Foundation Certificate in User Experience.

This article is tagged ethnography, fitts' law, heuristic evaluation, moderating, usability testing.

copyright © Userfocus 2021.