Over the last few years we've seen a quiet revolution in user experience research. Participants no longer need to come to a usability lab. Nowadays, we can carry out many user research activities over the web. Although we welcome this change (and have even developed our own remote usability testing tool), user experience research is fundamentally straightforward. There's a lot you can do with the simplest of tools.

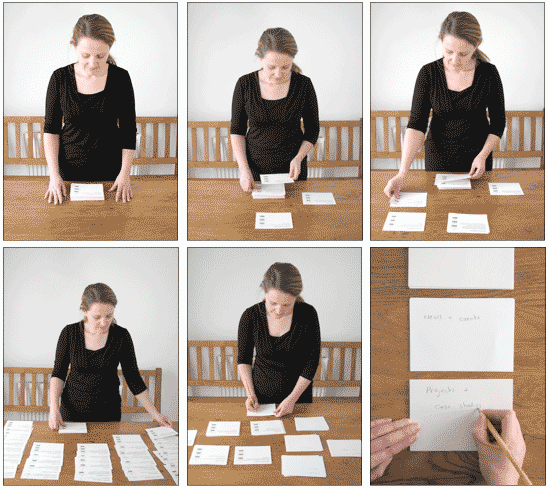

Exploratory card sorting

Many people are familiar with card sorting as a method of finding out how your users classify the information in your domain. You need this information to create the menuing system for your application or the information architecture for your web site. Figure 1 shows a card sort in progress.

How to carry out exploratory card sorting

- Prepare a stack of cards. Each card contains a title (usually the title of a function or a page within your web site). In my card sorts, I also include a short description of what that function is used for (this is optional but helps avoid simple keyword matching).

Card sorting works well when you have more than 30 and fewer than 100 cards. See Figure 2 for an example of a printed card.

- Ask around 15 users to sort the cards into groups that make sense to them.

- Once the cards have been sorted into groups, give each user a stack of blank cards. For each group, users take a blank card and write on the card a description of what makes that group a group (these will become the proto-headings of your navigation structure).

- Analyse the data to find out (a) which cards users place together, (b) the terms people use to describe the groups of cards, (c) which cards appear in multiple categories and (d) how the results compare with the existing (or proposed) design.

Software exists to make the analysis easier — I'd recommend SynCaps, version 1 of which is now a free download.
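If you want to see the core calculation behind part (a) of the analysis, it is a co-occurrence count: for every pair of cards, the percentage of participants who put them in the same group. The sketch below is a minimal Python illustration under stated assumptions, not a description of how SynCaps works internally. The input layout (one list of groups per participant), the function names and the card titles are all hypothetical.

```python
from collections import Counter
from itertools import combinations


def co_occurrence(sorts):
    """Count how many participants placed each pair of cards in the same group.

    Assumed input: one entry per participant, each a list of groups,
    each group a list of card titles.
    """
    pairs = Counter()
    for participant in sorts:
        for group in participant:
            # Sort the titles so ("A", "B") and ("B", "A") become the same key.
            for pair in combinations(sorted(set(group)), 2):
                pairs[pair] += 1
    return pairs


def agreement(sorts):
    """Express each pair count as a percentage of all participants."""
    n = len(sorts)
    return {pair: 100 * count / n for pair, count in co_occurrence(sorts).items()}


# Hypothetical data from two participants.
sorts = [
    [["Opening hours", "Contact us"], ["Order history", "Track a parcel"]],
    [["Opening hours", "Contact us", "Track a parcel"], ["Order history"]],
]
for pair, pct in sorted(agreement(sorts).items(), key=lambda kv: -kv[1]):
    print(pair, f"{pct:.0f}%")
```

Pairs that most participants grouped together are candidates to sit under the same heading; pairs that never appear in the result were never grouped together by anyone.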

The process I've just described is called an "open" card sort (for much more detail, I'd recommend Donna Spencer's book on card sorting). You can also carry out a closed card sort where you give people the group headings and ask them to put the individual cards in each group. This tests the quality of the taxonomy you have created.

But if you're interested in where in the navigation structure people would go to complete a task, you should use a tree test.

Tree test

Use a tree test to check if people can actually find stuff in your navigation structure.

How to carry out a tree test

- Prepare a stack of around 10-20 cards. Each card contains a short description of a likely goal that your web site supports. For example, "Find an exercise bike" might be a common task for a web site that sells fitness products. Tasks where people are asked to find stuff work well with a tree test.

- Prepare a set of group headings. These will be the headings from your existing navigation structure or derived from an open card sort. Then, on the back of each card, write down the sub-groups that appear in that group. For example, on one side of the card you might have the group heading "Sports & Leisure"; on the reverse, you might have the sub-groups "Fitness", "Camping & Hiking", "Cycling", etc.

- Lay out the group headings in front of the user in a grid. Give users the task cards. Ask them to pick the group card they would use to start that task. Then turn over the group card and ask them to pick the sub-group they would use to complete that task.

- Analyse the data to find out the percentage of users that choose the correct option for each task. For more detail on tree testing, try this article by Dave O'Brien.
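The scoring step is simple arithmetic. The minimal Python sketch below assumes you recorded each participant's group and sub-group choice for every task. It reports two percentages per task, one for picking the right group card and one for also picking the right sub-group, since splitting them shows whether people go wrong at the first or the second level. The data layout, the function name and the "Home & Garden" heading are hypothetical.

```python
def success_rates(responses, answers):
    """Per task: % choosing the right group, and % choosing right group and sub-group.

    responses: one dict per participant, mapping task -> (group, sub_group).
    answers: mapping task -> the (group, sub_group) you consider correct.
    """
    rates = {}
    for task, correct in answers.items():
        picks = [r[task] for r in responses if task in r]
        if not picks:
            rates[task] = None  # nobody attempted this task
            continue
        group_ok = sum(1 for group, _ in picks if group == correct[0])
        both_ok = sum(1 for pick in picks if pick == correct)
        rates[task] = (100 * group_ok / len(picks), 100 * both_ok / len(picks))
    return rates


task = "Find an exercise bike"
answers = {task: ("Sports & Leisure", "Fitness")}
# Hypothetical choices from four participants ("Home & Garden" is invented).
responses = [
    {task: ("Sports & Leisure", "Fitness")},
    {task: ("Sports & Leisure", "Cycling")},
    {task: ("Home & Garden", "Fitness")},
    {task: ("Sports & Leisure", "Fitness")},
]
print(success_rates(responses, answers))
```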

Trigger word elicitation

Use trigger word elicitation to identify the words or phrases that will encourage users to click on links. You need to identify trigger words because users arrive at a page on your web site, hunt around for the link that best fits their goal and click on it. If your site doesn't contain the trigger word, they hit the back button.

How to carry out a trigger word analysis

- Prepare a stack of around 10-20 task cards, as with the tree test. Each card contains a short description of a likely goal that your web site supports. For example, "Book a romantic meal for two" might be a common task for a restaurant web site close to Valentine's Day.

- Ask users what words or phrases they would expect to find on a web site that supported that task. For example, you might get phrases like "Book a table", "Make a reservation" and "Put your name down".

- Analyse the data to identify the common words used for each task. Make sure your web site contains those words to direct users towards the appropriate content.
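One way to find the common terms is to tally each phrase across participants and keep those offered by a reasonable share of them. The Python sketch below is illustrative only: it counts whole phrases (after normalising case and leading or trailing spaces) rather than individual words, and the 50% cut-off, the function name and the data layout are assumptions you would tune to your own study.

```python
from collections import Counter


def common_phrases(answers, min_share=0.5):
    """List the phrases offered by at least `min_share` of participants for each task.

    answers: mapping task -> one list of phrases per participant.
    min_share: an arbitrary cut-off chosen for this example.
    """
    result = {}
    for task, per_participant in answers.items():
        if not per_participant:
            result[task] = []
            continue
        counts = Counter()
        for phrases in per_participant:
            # Normalise case and outer spaces; count each phrase once per participant.
            counts.update({p.strip().lower() for p in phrases})
        n = len(per_participant)
        result[task] = [(p, c) for p, c in counts.most_common() if c / n >= min_share]
    return result


task = "Book a romantic meal for two"
# Hypothetical answers from three participants.
answers = {
    task: [
        ["Book a table", "Make a reservation"],
        ["Make a reservation"],
        ["make a reservation ", "Put your name down"],
    ]
}
print(common_phrases(answers))
```

If you would rather count single words, split each phrase on whitespace before updating the counter.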

Web board

Use a web board to find out where users expect to find your functions. This works well for a web page where navigation items appear in headers, footers and page margins as well as the more conventional menus. You need these data because if your functions appear where people expect, they will find them more quickly. The Software Usability Research Laboratory have published a case study using a similar technique.

How to use a web board

- Take a screen shot of a blank browser page. Open the image in your favourite drawing application and overlay a 5 x 5 grid on the page (Figure 3 shows an example). You want the printout to be as large as possible, so print it on A3 paper or use a photocopier to scale up an A4 or Letter-sized page to A3.

- Prepare a stack of cards. Each card contains the title of the function or link and a short description of what that function is used for (as with a card sort).

- Tell users that the browser page represents a page from your web site. Ask users to place each card on the grid in the approximate location where they would expect to find it. For example, you may find that users place a 'Back to home' card in the top left square and a 'Sign into your account' card in the top right square.

- Using the letters and numbers on the grid, note down where users expect each function to appear. Analyse the data to identify areas of agreement across participants. Make sure your functions and links are placed in these locations.
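A quick way to spot areas of agreement is to find, for each card, the grid cell chosen most often and the share of participants who chose it. The Python sketch below assumes cells are recorded as a column letter plus a row number (for example "A1" for the top left square, with columns A to E and rows 1 to 5); that labelling, the function name and the data are hypothetical.

```python
from collections import Counter, defaultdict


def placement_summary(placements):
    """For each card: the most popular grid cell and the % of participants who chose it.

    placements: one dict per participant, mapping card title -> grid cell such as "A1".
    """
    by_card = defaultdict(Counter)
    for participant in placements:
        for card, cell in participant.items():
            by_card[card][cell.strip().upper()] += 1  # treat "e1" and "E1" alike
    summary = {}
    for card, cells in by_card.items():
        cell, votes = cells.most_common(1)[0]
        summary[card] = (cell, round(100 * votes / sum(cells.values()), 1))
    return summary


# Hypothetical placements from three participants.
placements = [
    {"Back to home": "A1", "Sign into your account": "E1"},
    {"Back to home": "A1", "Sign into your account": "E1"},
    {"Back to home": "B1", "Sign into your account": "e1"},
]
print(placement_summary(placements))
```

A low percentage for a card suggests there is no shared expectation for it, which is useful to know before you commit to a location.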


Function familiarity test

Use a function familiarity test to gauge how often people use functions within your application. You need this information to discriminate frequently used functions from infrequently used functions.

How to carry out a function familiarity test

- Prepare a stack of cards. Each card contains a title of the function and a short description of what that function is used for. For example, for a mobile phone you might have "Address book", "Ring tones", "Network", etc.

- Ask users to sort each card into three piles: functions I use frequently; functions I use sometimes; and functions I rarely or never use. (This is easy to adapt: for example, you could change the test to measure levels of understanding by naming the piles "Functions I know how to use", "Functions I can use by muddling through" and "Functions I don't understand".)

- If a function appears in the "use frequently" pile, give it 5 points. If it appears in the "use sometimes" pile, give it 2 points; functions in the "rarely or never" pile score nothing. After you've tested all participants, add up the scores to identify the most used functions in your interface.
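The tally is easy to automate once you have typed up each participant's piles. In this minimal Python sketch the 5 and 2 point values come from the scoring rule above; because that rule only awards points to the first two piles, the "rarely or never" pile is given 0. The pile keys, function name and data are hypothetical.

```python
from collections import Counter

# Points per pile. The pile keys are hypothetical labels for the three piles.
POINTS = {"frequently": 5, "sometimes": 2, "rarely_or_never": 0}


def familiarity_scores(sessions):
    """Total points per function across participants, highest first.

    sessions: one dict per participant, mapping pile key -> list of card titles.
    """
    totals = Counter()
    for piles in sessions:
        for pile, cards in piles.items():
            for card in cards:
                totals[card] += POINTS[pile]  # zero-point cards still get an entry
    return totals.most_common()


# Hypothetical piles from two participants testing a mobile phone.
sessions = [
    {"frequently": ["Address book"], "sometimes": ["Ring tones"], "rarely_or_never": ["Network"]},
    {"frequently": ["Address book", "Ring tones"], "sometimes": [], "rarely_or_never": ["Network"]},
]
print(familiarity_scores(sessions))
```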

Swap Sort

Use a swap sort to find out the most important functions, features or tasks within your interface. You need this information to know how to prioritise content.

How to carry out a swap sort

- Prepare a stack of cards. Each card contains a title of the function, feature or task and a short description of it.

- Ask users to read through the cards and fish out the 10 most important cards for them. Put the other cards to one side — you won't be using them anymore.

- Ask users to place the 10 "most important" cards vertically in front of them. Ask users to rank order the cards by swapping adjacent cards, putting the most important ones higher up in the list, until no more can be swapped.

- Give the card placed first in the list 10 points, the card placed second in the list 9 points and so on, so the card placed last in the list gets 1 point. After you've tested all your users, total the points for each card and use the results to identify the most important functions in your interface.
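Swapping adjacent cards until no more swaps are needed is, in effect, a bubble sort carried out by hand, so each participant ends up with a complete ranking of their 10 cards. The points rule then turns those rankings into totals (a variant of what is often called a Borda count). In the minimal Python sketch below, a card in position p of an n-card list scores n - p points, which gives 10 points down to 1 for 10 cards. The function name and card titles are hypothetical, and the example is shortened to three cards per participant.

```python
from collections import Counter


def swap_sort_scores(rankings):
    """Total points per card across participants, highest first.

    rankings: one list per participant, most important card first.
    With 10 cards the first card scores 10 points and the last scores 1.
    """
    totals = Counter()
    for ranking in rankings:
        n = len(ranking)
        for position, card in enumerate(ranking):
            totals[card] += n - position
    return totals.most_common()


# Hypothetical rankings, shortened to three cards per participant for brevity.
rankings = [
    ["Search", "Basket", "Reviews"],
    ["Search", "Reviews", "Basket"],
    ["Basket", "Search", "Reviews"],
]
print(swap_sort_scores(rankings))
```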

Acknowledgements

Thanks to Gret Higgins, Philip Hodgson and William Hudson for comments on this article.